LaborMaxxing vs KnowledgeMaxxing: A Marxist Case for Google's AI Strategy

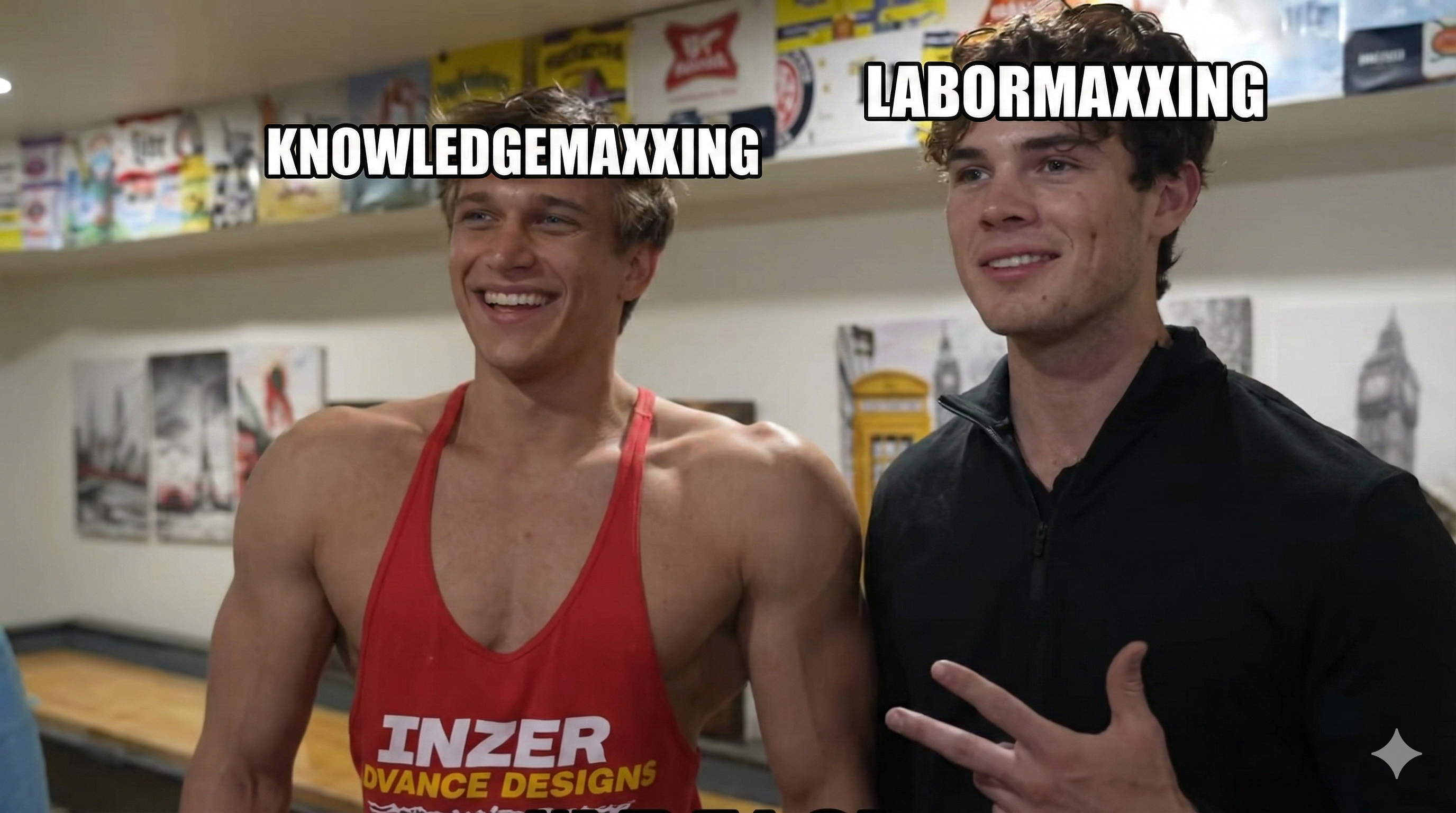

On February 5th, 2026, a streamer named Clavicular took a selfie with a fraternity member at Arizona State University. The photo went viral. 13.5 million views. The verdict from the internet: he got brutally frame mogged. If you don't know what that means, congratulations on touching grass recently. For the rest of us who have been rotmaxxing on the timeline, the "-maxxing" suffix has become the default way to describe going all-in on any particular strategy. Looksmaxxing. Gymmaxxing. Jestermaxxing. It's optimization culture distilled into a single suffix.[1]

So let me introduce two new terms to the lexicon: LaborMaxxing and KnowledgeMaxxing.

Right now, the major AI labs are pursuing two completely different strategies. OpenAI and Anthropic are LaborMaxxing: building AI that replaces human labor. Google DeepMind is KnowledgeMaxxing: building AI that expands human knowledge. One is zero-sum. The other isn't. And the one you root for says a lot about what you think the future should look like.

What is LaborMaxxing?

LaborMaxxing is the strategy of optimizing AI models to do work that humans currently get paid for. Not "assist with" or "augment." Do. The thesis is simple: if an AI agent can write code, manage email, fill out forms, triage support tickets, and navigate software interfaces, then every dollar currently spent on a human doing those tasks is addressable market.

The evidence isn't subtle.

On February 5th, 2026, OpenAI launched Frontier, an enterprise platform explicitly designed so companies can deploy AI agents like human employees. It includes an onboarding process for agents and a feedback loop for improvement, mimicking HR workflows. Bloomberg's headline: "OpenAI Unveils Platform to Help Companies Deploy 'AI Coworkers.'" Initial customers include HP, Intuit, Oracle, and Uber. The $20,000/month pricing tier for research agents signals that OpenAI expects a single AI agent to produce well over its price in value, on the order of $100K monthly by my estimate. Value that currently goes to human salaries.[2]

Ten days later, OpenAI hired Peter Steinberger, the creator of OpenClaw, the fastest-growing project in GitHub history with 200,000+ stars. OpenClaw is an autonomous AI agent that manages email, handles calendar, browses the web, runs shell commands, and chains tool-use actions together without asking permission at each step. It is, functionally, a digital employee. Sam Altman announced the hire saying: "Peter Steinberger is joining OpenAI to drive the next generation of personal agents... We expect this will quickly become core to our product offerings."[3]

OpenAI's Operator, powered by the Computer-Using Agent model, uses reinforcement learning to interact with graphical interfaces. Clicking, scrolling, typing, managing logins. They are literally training AI to use the same tools humans use. This is the purest possible form of labor substitution.

And Anthropic? Same playbook. Claude Opus 4.6 leads SWE-Bench with a 72.5% score. Claude Code is an agentic coding tool designed to write, test, and ship software autonomously. MCP (Model Context Protocol) is an open standard for connecting AI agents to tools, APIs, and data sources. The entire product surface is oriented toward one thing: making AI capable of doing the work software engineers currently do.[4]

Full disclosure: this blog post is being co-written with Claude Opus 4.6 inside Claude Code. I am using a LaborMaxxing product to write an essay critiquing LaborMaxxing. The tool is doing exactly what it was designed to do: displacing the labor of writing. I'm not going to pretend that isn't funny.

What is KnowledgeMaxxing?

KnowledgeMaxxing is a different game. Instead of building AI that does human work, you build AI that discovers things no human has discovered yet. The target isn't the labor market. It's the frontier of knowledge.

On February 19th, 2026, Google released Gemini 3.1 Pro. The benchmarks are impressive across the board: 77.1% on ARC-AGI-2 (more than double Gemini 3 Pro's score), 94.3% on GPQA Diamond (graduate-level science), 2887 Elo on LiveCodeBench Pro, and #1 rankings on 12 of 18 tracked benchmarks.[5]

But the benchmark that matters most for this argument is SciCode: a benchmark that evaluates programming for scientific research applications. Gemini 3.1 Pro leads the field at 59.0%. This isn't a coding benchmark in the "write me a React component" sense. This is a benchmark for whether AI can help solve scientific problems. Google is optimizing for this. That's not an accident.

And it's not just benchmarks. Look at the portfolio:

Medicine and drug discovery

- AlphaFold solved the 50-year-old protein folding problem and earned Demis Hassabis and John Jumper a share of the 2024 Nobel Prize in Chemistry. Over 3 million researchers in 190+ countries now use it. AlphaFold 3 extended this to protein-DNA, protein-RNA, and protein-drug interactions.

- Isomorphic Labs, DeepMind's drug discovery spinoff, unveiled IsoDDE in February 2026, doubling AlphaFold 3's accuracy on protein-ligand structure prediction. Scientists called it "effectively an AlphaFold 4." They have $3B in partnerships with Eli Lilly and Novartis targeting aggressive cancers and neurodegenerative diseases, with first clinical trials expected by end of 2026.[7]

- AI Co-Scientist, a multi-agent system on Gemini, generates novel scientific hypotheses. At Stanford, it identified drug repurposing candidates for liver fibrosis. For Acute Myeloid Leukemia, it proposed treatments that were confirmed to inhibit tumor viability in lab tests. For antimicrobial resistance, it predicted resistance mechanisms that matched experimental results before they were published.

- TxGemma, an open-source model family for therapeutics, outperforms prior state-of-the-art on 64 of 66 drug discovery tasks. Any lab in the world can download it for free.

- A 27-billion-parameter biology model built with Yale (C2S-Scale) discovered that combining silmitasertib with low-dose interferon increases cancer antigen presentation by 50%, potentially making "cold" tumors visible to the immune system. This was experimentally validated in living cells.[8]

Mathematics

- Gemini Deep Think scored 35/42 on the 2025 International Mathematical Olympiad, gold-medal standard. It settled a decade-old conjecture in online submodular optimization by engineering a counterexample that proved human intuition false.

- AlphaEvolve broke the record for 4x4 complex matrix multiplication that had stood since Strassen's algorithm in 1969. Across 50 open math problems, it discovered improved solutions 20% of the time.[9]

- FunSearch found new constructions for the cap set problem, which Terence Tao once called his favorite open question.

Materials, climate, and energy

- GNoME discovered 381,000 new stable crystal materials, equivalent to nearly 800 years of accumulated human knowledge. Among them: 528 potential lithium-ion conductors (25x more than all previous studies combined) for next-generation batteries.[10]

- GenCast is more accurate than the world's best traditional weather system on 97.2% of evaluated targets. It generates 15-day ensemble forecasts in 8 minutes on a single TPU. The U.S. National Hurricane Center is evaluating it for real-world forecasting.

- DeepMind partnered with Commonwealth Fusion Systems to apply deep reinforcement learning to plasma control in fusion reactors. Their TORAX simulator enables millions of virtual experiments. SPARC is targeting net-positive fusion energy in late 2026.

- DeepMind announced the UK's first fully automated AI research lab in December 2025, integrating Gemini with robotics to synthesize hundreds of new materials per day, targeting room-temperature superconductors, advanced batteries, and next-gen solar cells.

Biology beyond proteins

- AlphaProteo designs novel protein binders from scratch with 3x to 300x better binding affinities than existing methods. In collaboration with the Francis Crick Institute, its binders for SARS-CoV-2 were confirmed to block infection in human cells.

- AlphaGenome, published in Nature in January 2026, predicts the function of DNA's "dark matter" (non-coding regions) at up to 1 million base pairs at a time. Nearly 3,000 scientists in 160 countries are using it to pinpoint driver mutations in cancer genomes.

None of this replaces a software engineer's job. It goes after cancer. Fusion. Superconductors. The genome. Demis Hassabis said at the India AI Impact Summit in February 2026 that we're approaching "a new golden era of discovery, a new renaissance" defined by "radical abundance." That's a different vocabulary than "AI coworkers" and "onboarding agents."

The Marxist critique: why LaborMaxxing is deflationary

Marx had a term for what happens when capital relentlessly pursues labor displacement: the crisis of overproduction. Every company uses AI to eliminate labor costs. Margins expand. But labor is also demand. Workers are consumers. Kill wages and you kill the purchasing power that sustains the market for your products.

LaborMaxxing is this dynamic on fast-forward. Run the numbers on OpenAI's Frontier: a $20K/month AI agent costs $240K/year, which is more than a single $150K/year knowledge worker. The pitch only works if one agent absorbs the work of several people. If it covers, say, five of them ($750K/year in salaries), that's a 68% cost reduction for the employer. Multiply that across millions of knowledge workers and you get a massive deflationary shock. Wages collapse. Demand contracts. The companies selling AI agents discover that their customers' customers can no longer afford to buy anything. The surplus value extracted by replacing labor has no one left to consume it.
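How big the saving is depends entirely on how many salaries one agent replaces. Here's a back-of-envelope sketch using the $150K salary and $20K/month price from this post. The workers-per-agent values are my own illustrative assumptions, not anything OpenAI has published.

```python
def cost_reduction(worker_salary, agent_monthly_price, workers_replaced):
    """Fractional saving from swapping `workers_replaced` salaries for one agent.

    A negative result means the agent costs more than the people it replaces.
    """
    human_cost = worker_salary * workers_replaced
    agent_cost = agent_monthly_price * 12
    return 1 - agent_cost / human_cost


def break_even_workers(worker_salary, agent_monthly_price):
    """Number of salaries one agent must replace before it saves anything."""
    return agent_monthly_price * 12 / worker_salary


# Hypothetical workers-per-agent scenarios (assumptions, for illustration only).
for n in (1, 2, 5):
    print(n, f"{cost_reduction(150_000, 20_000, n):.0%}")
```

Break-even lands at 1.6 workers per agent. Below that, the agent is the more expensive hire.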

The game theory is ugly too. LaborMaxxing is a prisoner's dilemma. Every individual company is rationally incentivized to adopt AI agents and cut headcount. But when everyone does it simultaneously, the aggregate result is a demand crisis. No single company can afford not to play. The collective outcome is worse than the starting position.
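The structure of that dilemma is easy to check with a toy payoff table. The numbers below are hypothetical, chosen only to have the standard prisoner's dilemma ordering. They are not estimates of real profits.

```python
# Hypothetical payoffs (arbitrary profit units) for two competing firms.
# Key: (firm A's move, firm B's move) -> (A's payoff, B's payoff)
PAYOFFS = {
    ("hold", "hold"): (3, 3),          # both keep their workforce
    ("hold", "automate"): (0, 5),      # A gets undercut by B
    ("automate", "hold"): (5, 0),      # A undercuts B
    ("automate", "automate"): (1, 1),  # both cut wages, demand contracts
}
MOVES = ("hold", "automate")


def best_response(opponent_move):
    """Firm A's payoff-maximizing move against a fixed move by firm B."""
    return max(MOVES, key=lambda move: PAYOFFS[(move, opponent_move)][0])


def is_dominant(move):
    """True if `move` is the best response to every possible opponent move."""
    return all(best_response(other) == move for other in MOVES)


print("automate is dominant:", is_dominant("automate"))
print("mutual automation:", PAYOFFS[("automate", "automate")])
print("mutual restraint:", PAYOFFS[("hold", "hold")])
```

With these payoffs, automating is each firm's best response whatever the rival does, yet both end up at 1 instead of the 3 they had when both held.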

Sam Altman himself acknowledged this at the India AI Impact Summit on February 19th: "There is some real displacement by AI of different kinds of jobs." He's not wrong. He's describing the natural consequence of the strategy his company is pursuing.[6]

The LaborMaxxing playbook treats global wages as total addressable market. The strategy's success is literally measured by how much human compensation it can absorb. That's not a side effect. It's the pitch deck.

Why KnowledgeMaxxing isn't zero-sum

Google's approach works differently.

When Gemini Deep Think settles a decade-old mathematical conjecture, no one loses their job. When AlphaFold predicts a new protein interaction, it doesn't displace a biologist -- it gives biologists a tool they never had. When AI Co-Scientist identifies a drug candidate for liver fibrosis, it creates a treatment pathway that didn't exist yesterday.

KnowledgeMaxxing is positive-sum. New knowledge creates new industries. Penicillin didn't displace workers. It created the pharmaceutical industry. Semiconductors didn't eliminate jobs. They created the technology sector. Knowledge expansion is the one economic activity that consistently grows the pie instead of re-slicing it.

Gemini 3.1 Pro leading SciCode at 59.0% isn't just a benchmark number. It's a directional signal. Google is telling you what they're optimizing for: not "can this model write your code for you" but "can this model help solve problems that no human has solved yet." The 1-million-token context window isn't for ingesting your Jira backlog. It's for ingesting entire research corpora.

Capitalism's blind spot: the common good has no TAM slide

Capitalism is good at allocating resources toward activities that generate private returns. If you can build it, sell it, and capture the margin, capital will find you. LaborMaxxing is a perfect capitalist product. "Pay us X, save Y on headcount." It fits on a slide deck. It survives a board meeting. It has unit economics.

KnowledgeMaxxing does not. Discovering that silmitasertib combined with interferon makes cold tumors visible to the immune system might be one of the most important oncology findings this decade. But there's no SaaS pricing model for it. AlphaGenome helping nearly 3,000 scientists pinpoint cancer mutations doesn't have a monthly recurring revenue line item. GNoME discovering 528 potential lithium-ion conductors doesn't have a customer success team.

This is the mismatch. Markets price labor displacement efficiently because the savings are immediate and capturable by a single firm. Markets price knowledge creation terribly because the benefits are diffuse, slow, and accrue to everyone. An economist calls these positive externalities. A Marxist calls it the contradiction between private accumulation and social production.

Capital flows toward LaborMaxxing because the returns are legible. Curing diseases, achieving fusion, discovering superconductors -- huge value to humanity, but illegible value to a quarterly earnings report. The most important work AI could do is the work that capitalism is structurally least equipped to fund.

The numbers make it concrete. OpenAI is valued at $300B. Anthropic at $60B+. These valuations are based on projected revenue from selling AI labor. AlphaFold is used by 3 million researchers and has accelerated drug discovery at every major pharmaceutical company on Earth. What is that worth? No one knows. But Isomorphic Labs, the entity actually trying to monetize it, raised $600M. It's a rough comparison, a valuation against a funding round, but OpenAI's number alone is 500x Isomorphic's. That ratio tells you everything about capitalism's priorities and nothing about humanity's.
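Here is the ratio worked out from the figures quoted above. Anthropic's "$60B+" is taken at its $60B floor, which is my simplification.

```python
# Figures as quoted in this post, in US dollars.
OPENAI_VALUATION = 300_000_000_000
ANTHROPIC_VALUATION = 60_000_000_000  # "$60B+" taken at its floor (assumption)
ISOMORPHIC_RAISED = 600_000_000


def ratio(labor_side, knowledge_side):
    """How many times larger the labor-side figure is than the knowledge-side one."""
    return labor_side / knowledge_side


openai_only = ratio(OPENAI_VALUATION, ISOMORPHIC_RAISED)
both_labs = ratio(OPENAI_VALUATION + ANTHROPIC_VALUATION, ISOMORPHIC_RAISED)
print(f"OpenAI alone: {openai_only:.0f}x")
print(f"OpenAI + Anthropic: {both_labs:.0f}x")
```

OpenAI alone gives the 500x figure. Adding Anthropic pushes it to 600x.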

This isn't new. Public goods have always been under-provisioned by markets. That's why governments fund basic research, why the NIH exists, why CERN was built by a consortium of nations rather than a startup. What's new is the scale. We have tools that could compress decades of scientific discovery into years. And the dominant economic incentive is to use them to automate expense reports.

AlphaFold doesn't have a pricing page. TxGemma is on Hugging Face for free. AlphaGenome has no paywall. Google is giving away tools that advance human health while OpenAI charges $200/month for a chatbot and $20,000/month for an AI coworker. One of these strategies will matter in fifty years.

Why Google can afford to play this game

If KnowledgeMaxxing is so great, why isn't everyone doing it?

Because it doesn't pay the bills directly.

Google can KnowledgeMaxx because they don't need AI to be the product. They need AI to make their existing products better. Search, Ads, Cloud, Android, YouTube, Workspace. Google has 4.6 billion users across its ecosystem. Gemini doesn't need to sell API seats to survive. It needs to make Search more useful, make Workspace stickier, and make Cloud more attractive. The business model is indirect monetization through ecosystem value.

OpenAI and Anthropic don't have this luxury. They are AI-native companies. AI is the product. They need to demonstrate immediate, measurable ROI to enterprise customers to justify their valuations. And the fastest path to measurable ROI is labor displacement. "We replaced three engineers with one Claude Code license" is a story that sells. "We expanded the frontier of human knowledge" is a story that wins Nobel Prizes. Only one of these stories closes deals.

Google can burn cash on fundamental research because the research compounds into ecosystem value over decades. AlphaFold doesn't have a pricing page. It has a Nobel Prize. TxGemma is open-source. The AI for Math Initiative is a partnership with research institutions, not a revenue center. Google can afford to play the infinite game because they already won a finite one.

Not altruism. Strategy. But the externalities happen to be positive for humanity, which is more than you can say for "we automated your job and charged your employer $20K/month for the privilege."

The open-source wildcard

There's a third path that might mog both. Open-source models.

OpenClaw itself is going to an independent foundation. Steinberger open-sourced the most capable autonomous agent framework and it hit 200K GitHub stars before any company could capture it. Meta's Llama. Mistral. DeepSeek. The open-source ecosystem is producing models that compete with closed-source offerings at a fraction of the cost.

If open-source models get good enough fast enough, the LaborMaxxing playbook breaks down because the margin disappears. You can't charge $20K/month for an AI coworker when someone can run an equivalent agent locally for the cost of electricity. The deflationary pressure that LaborMaxxing applies to the labor market would, ironically, apply to the LaborMaxxers themselves.

I don't know which model wins. It might be none of the above. But the open-source option is the one that keeps the LaborMaxxers honest, and it's the one most likely to democratize the KnowledgeMaxxing benefits that Google currently has the unique privilege to pursue.

The case for KnowledgeMaxxing as the better future

I think KnowledgeMaxxing is the better model for the future of humanity. Not because LaborMaxxing won't work -- it will. It's already working. Companies are cutting headcount and citing AI capabilities. The economic incentives are real.

But "it works" and "it's good" are different claims. Asbestos insulation worked. Leaded gasoline worked. The question isn't whether AI can replace human labor. It obviously can. The question is whether that's the best use of these systems.

Curing diseases beats automating email triage. Proving mathematical theorems beats writing boilerplate CRUD endpoints. Discovering new materials beats filling out expense reports. We can point these models at the unknown or we can point them at payroll.

Google isn't doing this out of generosity. They can afford to because they don't need to sell AI-as-labor. But motives aside, the externalities matter. A world where AI accelerates scientific discovery is a world where new possibilities emerge. A world where AI primarily accelerates labor displacement is a world where we fight over a shrinking pie.

The maxxing metaphor lands here too. In the original community, there's softmaxxing (going to the gym, getting a haircut) and hardmaxxing (surgery, starvation, bone-smashing). LaborMaxxing is the hardmaxxing of AI strategy. It produces results. The side effects are the problem.

KnowledgeMaxxing is the softmaxxing play. Slower. Harder to monetize. The gains compound over years, not quarters. But it leaves the world with more than it started with. That's the only kind of mogging that ages well.

Sources

- [1] NBC News - "Mog, maxxing and the other manosphere lingo that has taken over social media" (February 2026).

- [2] Bloomberg - "OpenAI Unveils Platform to Help Companies Deploy 'AI Coworkers'" (February 2026).

- [3] TechCrunch - "OpenClaw creator Peter Steinberger joins OpenAI" (February 2026).

- [4] Anthropic - Claude Opus 4 and Claude Code.

- [5] Google Blog - "Gemini 3.1 Pro: A smarter model for your most complex tasks" (February 2026).

- [6] Fortune - "Sam Altman confirms AI-driven job displacement" (February 2026).

- [7] Nature - "'An AlphaFold 4': scientists marvel at DeepMind drug spin-off's exclusive new AI" (February 2026).

- [8] Google Blog - "Google Gemma AI Cancer Therapy Discovery" (October 2025).

- [9] Google DeepMind - "AlphaEvolve: A Gemini-powered coding agent for designing advanced algorithms" (May 2025).

- [10] Nature - "Scaling deep learning for materials discovery" (November 2023).